Out of service:

Consider the process of contacting support from the customer's perspective. The majority of people have had at least one bad experience with customer service. Usually, there is an issue that cannot be remedied without assistance, so the customer must seek the contact details for support, initiate communication, and wait for a response. It seems simple enough. However, this process can culminate in confusion, frustration, and impatience for the customer.

The thought of contacting customer support can be unpleasant for many people. A few common mishaps are guaranteed to make the situation worse:

- Slow responses and long waiting times

- Overly complicated procedures

- Untrained staff members

- No follow-ups

With the convenience and accessibility of instant A2P messaging, communication channels like enterprise SMS, OTT messaging, email-to-text, and live chat are highly practical solutions. The concise and immediate nature of chat support means that customers can get the answers they are looking for without the wait.

Businesses that absolutely failed at chat support—and one that completely owns it

Take a look at three 'horror story' examples of how online support can go so totally wrong.

Telstra's "virtual moron-idiot" chatbot

In 2018, Telstra customers blasted the company when it launched a support chatbot that reportedly failed to complete basic tasks. Codi, Telstra's virtual assistant, was unable to answer simple questions, differentiate between a person's name and geographic locations, or directly transfer users to a human agent.

"Codi the virtual @Telstra assistant is the worst idea in the history of customer support. It is beyond useless, and only serves to make me angrier." - EH (@uggugg), February 22, 2018

A Telstra spokesperson said, "Customers engaging with Codi can switch to engage with a live chat agent at any stage."

However, when asked how to chat with a human representative, the spokesperson could not answer. Instead, they encouraged customers to interact with human Telstra consultants by phone or in-store, and not via chat support.

"Codi isn't meant to replace people, it's a digital self-service channel provided as a further engagement option for customers ... If a customer wishes to speak to a consultant, we encourage them to visit a store or call our customer service teams on 13 22 00."

Telstra went on to defend Codi as an AI that is still learning and "will improve over time".

Currently, Telstra has nine chatbots functioning on its systems, integrated into existing platforms both customer-facing and internal.

Amazon's "infuriating" live chat

CEO Jeff Bezos describes Amazon as "customer-obsessed" with a strong focus on customer service and satisfaction.

However, this was not the case for Chris Williams. In 2013, Williams had the unfortunate experience of interacting with an Amazon representative on live chat who appeared to not understand English.

Williams explained he was concerned about his account security and that someone named Brittni might be attempting a phishing scam. He asked that the email address similar to his own (but not his) be removed from his account. The representative, Farah, responded with a mixture of prewritten replies and poorly written English, referring to Williams as "maam" multiple times.

According to Williams, he spent almost an hour trying to resolve the issue. Still, it was apparent that Farah did not understand the initial request. Eventually, Farah offered to transfer the chat to an account specialist. However, despite Farah saying four times that the conversation would be transferred, it never was.

Farah then asked for an apology from Williams, and called him 'Brittni'. After much frustration and miscommunication, Farah asked for Williams' phone number so that a customer service representative could call him.

Ever since Williams published the whole chat transcript, this case has become a chat support cautionary tale for businesses and customers alike.

Bank of America's "cookie-cutter" Twitter responses

In 2013, Mark Hamilton drew an anti-foreclosure message in chalk, condemning Bank of America. He took to Twitter to share that police had instructed him to vacate the sidewalk outside the bank's Manhattan branch.

"Just got chased away by #NYPD 4 'obstructing sidewalk' while #chalkupy-ing with @CyMadD0x outside @bankofamerica HQ pic.twitter.com/fXyI2JUQVv" - darthmarkh (@darthmarkh), July 6, 2013

The subsequent responses from Bank of America’s Twitter account appeared to lack a human touch, attempting to address bank account issues rather than the matter at hand.

Initially, it seemed that one of the world's largest banks had employed a Twitter bot – a program that sends automated replies to tweets that mention the company. However, this was not so.

"All of our interactions are personal and handled by a team of over 100 social-media servicing representatives," a spokesperson for the bank said.

"We respond to mentions of the bank to help identify underlying customer issues in addition to direct requests for help."

Mentions of Bank of America on Twitter routinely garner scripted responses, but in this instance the topic was a sensitive one. By failing to personalize its response to Hamilton's tweet, the bank's Twitter account came across as disconnected and unconcerned. The cookie-cutter responses reinforced Hamilton's and other angry tweeters' view of the bank as a "faceless, heartless conglomerate."

And now, this.

Check out this example of a business providing tailored communication through its chat support services, and completely nailing it!

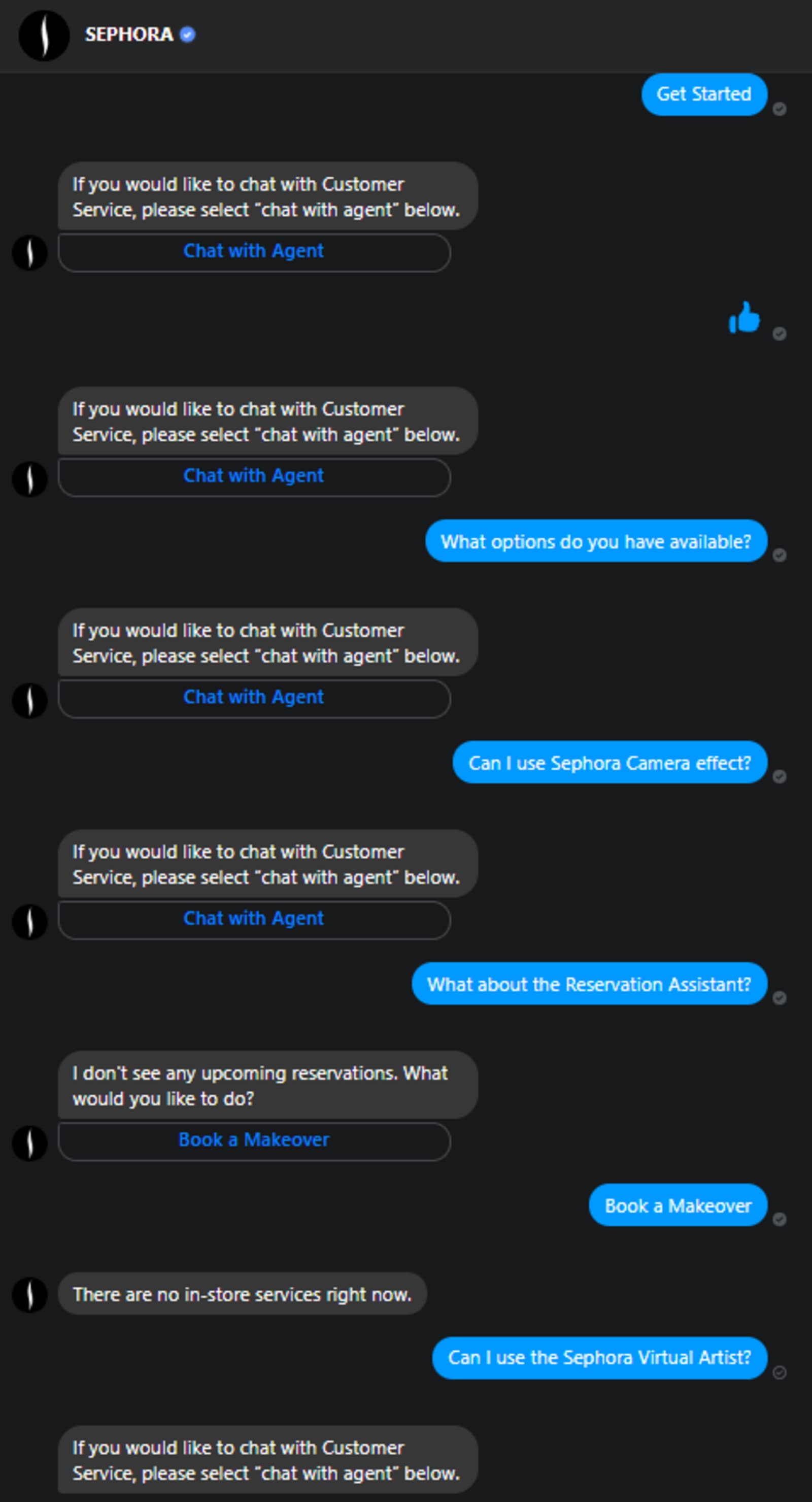

Sephora's personal assistant chatbots

Sephora has been praised for its Kik and Messenger communication strategy. Using both chatbots and human customer service representatives, it aimed to tailor each experience to individual customers.

To provide interactive assistance, Sephora integrated a Kik chatbot to help users with makeup tips, how-to videos, and reviews. It also offered a Reservation Assistant, Virtual Artist, and Camera Effects as part of its Facebook Messenger offerings.

As you can see below, Sephora's chatbots are currently not functioning at optimal levels.

The chatbot continually responded with, "If you would like to chat with Customer Service, please select 'chat with agent' below." It only responded with something different when I mentioned reservations, which also turned out to be a dead end.

While Sephora may not be offering its highly interactive chatbot services right now, that doesn't mean that its strategy is any less impressive. Sephora is an excellent example of tailored communication and implementing interactive chat support services.

These businesses absolutely failed at chat support, yet they have taken those mistakes and turned them into lessons. Those pitfalls now serve as examples and cautionary tales for all companies on how NOT to implement online support.

As we see in Sephora’s example, it helps not only to consider the ten 'what NOT to do's', but also to be creative and tailor communication to customers to enhance CX.

Vincenza is a Content Specialist at SMSGlobal. Based in Melbourne, she is a creative and driven go-getter who loves to engage with her audience through storytelling. When she isn’t fulfilling her calling as a wordsmith, she enjoys travel, live music, and eating delicious, delicious food.