Words have the power to bring imagery to life, and recent advances in AI for design make that almost literal. Adobe's latest offering, Generative Fill, is an AI-powered, content-aware tool in Photoshop that turns a text prompt into artwork. With the ability to quickly add, remove, or replace content in an image based on simple text prompts, Generative Fill pushes the boundaries of design innovation and creativity.

These developments raise two questions:

- Can Generative Fill and similar AI tools reduce the need for designers, and can marketers use AI to create their own marketing creatives?

- To what extent can AI produce high-quality images that satisfy all the requirements of a design brief without requiring additional external editing?

The claims surrounding this feature are bold, but the real test is to try it on an actual brief.

Let's put this to the test

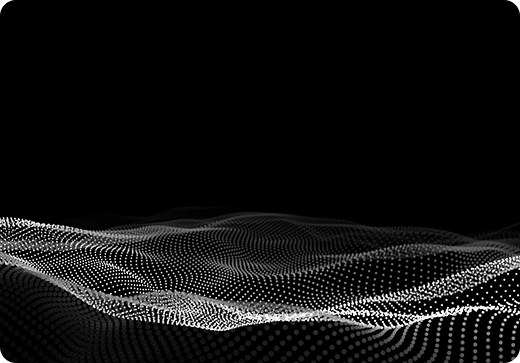

One of my clients requested a series of website banners for a cutting-edge platform that grants enterprises and SMEs exclusive access to a Virtual Metaverse Appearance (VMA) through VR headsets. The brand's core belief revolves around harnessing the potential of the Metaverse to empower its customers, unlocking unprecedented levels of productivity, creativity, and innovation. It was crucial for the banners to convey these qualities and align with the given brief. My goal was to capture the essence of virtual reality and the metaverse while showcasing technological advancement and pioneering design, with a predominantly blue color palette to match the brand's established visual identity.

Breaking down the approach

1. Choosing Reference Images: I searched for relevant images using keywords like "virtual reality headset," "space," "neon blue," "purple," "starlight," and "gateway," and imported them into Photoshop as a reference base.

2. Providing Text Prompt: I started by experimenting with prompts, rephrasing and modifying them until the results moved toward the desired outcome. Design theory fundamentals helped me articulate precise directions that accounted for visual style, mood, and brand identity. By applying design principles, I was able to guide the AI on color palettes, typography, and overall aesthetics, which resulted in visually pleasing images.

3. AI Generation: Generative Fill generates multiple image options per prompt, which gave me a range of possibilities to explore. With each generation, I moved closer to my desired result, fine-tuning the output to meet my specific needs. Choosing the right image from the many the AI provides is crucial, and this is where an understanding of the brand's personality and identity helps in making informed decisions. A designer's eye also helps in identifying which areas need further editing and which elements already align with the intended design direction.

4. Fine-tuning: I chose specific areas to extend images and make them fit the required dimensions. I also identified smaller sections in the images that needed further editing and replaced the content accordingly. This allowed me to refine the composition and achieve the desired visual outcome.

Challenges Faced

1. Maintaining Text Prompt History: Unlike ChatGPT, which maintains a continuous conversation by retaining older prompts, Generative Fill in Photoshop overwrites the previous prompt when you work on a particular generative layer. Once overwritten, the older prompts are not preserved.

Workaround: To edit the same generative layer, simply add new instructions or rephrase the previous prompt to incorporate the desired changes.

2. Working on Multiple Generative Layers: Generative Fill uses the bottom layers as a reference when generating the image for the top layer. However, if you edit any of the bottom layers, there is a high possibility that the top layers will no longer align with them, which means regenerating or reworking the layers above.

Workaround: You can create each layer independently, allowing you to modify, arrange, and delete layers as needed without affecting the others. Alternatively, you can merge the background layers before adding objects or subjects on top. By doing so, you simplify the layer structure and minimize the risk of misalignment when editing bottom layers.

3. Artboard Size vs. Generated Image Size: Generative Fill generates images that are larger than the artboard, so portions of the image get cut off. It does not allow you to move or resize the generated image to fit the artboard.

Workaround: You can utilize Generative Fill again to extend the image and create an extension until you achieve your desired dimensions.
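Before extending, it helps to know how many pixels each side still needs. The following is a minimal Python sketch of that arithmetic, not part of Photoshop or its scripting API: the function name, the `Padding` type, and the example sizes (a 1024 x 1024 generated image and a 1920 x 1080 banner) are all assumptions chosen for illustration. It splits the missing width and height evenly so the original image stays centred.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Padding:
    """Pixels to add on each side of the image (hypothetical helper type)."""
    left: int
    right: int
    top: int
    bottom: int


def extension_needed(image_w: int, image_h: int,
                     target_w: int, target_h: int) -> Padding:
    """Return how far to extend each side to reach the target size.

    The image is kept centred. A dimension that already meets or
    exceeds the target needs no extension, so its padding is zero.
    """
    extra_w = max(target_w - image_w, 0)
    extra_h = max(target_h - image_h, 0)
    left = extra_w // 2   # any odd leftover pixel goes to the right
    top = extra_h // 2    # any odd leftover pixel goes to the bottom
    return Padding(left, extra_w - left, top, extra_h - top)


if __name__ == "__main__":
    # Example sizes are assumptions, not values from the project.
    print(extension_needed(1024, 1024, 1920, 1080))
```

The resulting numbers can be used as a guide when enlarging the canvas and selecting the empty strips to fill on each side.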

4. Lack of Consistency: Generative Fill does not consistently produce the same result for the same prompt, leading to a lengthy trial-and-error process.

While there might not be a direct workaround for this limitation, embracing the iterative nature of the process and being open to exploration and experimentation can help uncover unique and unexpected results. It's important to approach the tool with flexibility and view the variations as opportunities for creative exploration rather than strict limitations.

Our Final Results:

Created using only the Generative Fill tool:

Created using Generative Fill, with some parts edited manually:

It is important to acknowledge that AI, including Generative Fill, is far from perfect. Relying solely on Generative Fill for image creation may not always yield the desired results. However, because I could manipulate, modify, and refine the generated images in Photoshop, I was able to improve imperfect results, work around the AI's limitations, and achieve the desired outcomes.

Non-designers can still harness the power of tools like Generative Fill to explore the possibilities of AI and expand their creative horizons. However, they may face challenges along the way, such as understanding visual aesthetics and achieving their desired outcomes. While the designs generated might be visually appealing, they may lack effectiveness in achieving specific goals.

For instance, in the case of the website banners I designed, the ultimate objective was to attract viewers' attention and convert them into platform users. Relying solely on trial and error to generate visually pleasing images does not guarantee an effective design, and it is rarely efficient. A designer can guide the AI methodically by providing prompts informed by knowledge of design theory, user behavior, and the brand's personality. This approach produces designs that meet the requirements of the brief and are aesthetically appealing, while keeping the process efficient.

AI tools like Generative Fill are exciting features for non-designers to explore and create experimental outcomes. However, when a design must serve a specific goal, designers can use AI to produce more refined and effective results. Our expertise in design theory, aesthetics, and technical skills remains indispensable. The key lies in embracing the collaboration between human creativity and AI technology to achieve remarkable results.

Bhavya Shah is a graphic designer focused on brand strategy and fascinated by UX research. She thrives on combining research, data, and design to create impactful visual solutions.